Data Center Network – Top of Rack (TOR) vs End of Row (EOR) Design

When there are a lot of servers to connect (as in a Data Center), the network needs to be flexible enough to support the compute power required for large installations. Two popular network designs are used in such circumstances: TOR (Top of Rack) and EOR (End of Row). In this article, let us look at the advantages and disadvantages of both approaches.

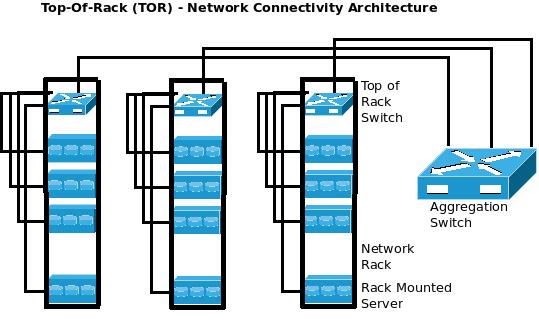

TOR – Top of Rack design:

In a Data Center, there are several racks of servers/ storage equipment. Each rack contains multiple computing devices. The TOR – Top of Rack approach places Network Switches in every rack and connects all the computing devices in that rack to them. These Network Switches are, in turn, connected to Aggregation Switches using one or a few cables.

Advantages/ Limitations of TOR – Top of Rack approach:

- Cabling complexity is minimized as all the servers are connected to the switch in the same rack and only a few cables go outside the rack.

- Fewer and shorter cables are required, as each server does not need to connect to the aggregation switch by itself using a long cable (as in EOR configurations).

- Generally, copper cables are used to connect within the rack and fiber cables are used to connect each TOR switch to the aggregation switch. This design enables expansion, because the network might run at 1GE/ 10GE today and can be upgraded to run at 10GE/ 40GE in future with minimal cost and few changes to cabling.

- If the racks are small, there could be one network switch for 2-3 racks.

- TOR architecture supports modular deployment of data-center racks as each rack can come in-built with all the necessary cabling/ switches and can be deployed quickly on-site.

- Since 1U/2U Switches are used in each rack, achieving scalability beyond a certain number of ports would become difficult. Even if more switches are stacked together, they might not have a non-blocking architecture due to their limited backplane connectivity.

- More switches are required in such installations and each switch needs to be managed independently. So, capital and maintenance costs might be higher in TOR deployments.

- There may be more unused ports in each rack (as the switches have fixed configurations and the number of servers varies), and it is very difficult to provide exactly the required number of ports. The unused ports still consume power and cooling, so part of that capacity is wasted.

- Unplanned Expansions (within a rack) might be difficult to achieve using the TOR approach.
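
The unused-port limitation above can be illustrated with a little arithmetic. The following is only a rough sketch: the 48-port switch size, the server counts per rack and the function name `tor_port_usage` are assumptions made up for illustration, not figures from any particular deployment.

```python
import math

def tor_port_usage(servers_per_rack, ports_per_switch=48):
    """Return a (switches_needed, unused_ports) pair for each rack.

    Assumes every rack gets its own fixed-configuration TOR switch(es)
    and that each server uses exactly one switch port.
    """
    usage = []
    for servers in servers_per_rack:
        # A rack always needs at least one switch, even if it is nearly empty.
        switches = max(1, math.ceil(servers / ports_per_switch))
        unused = switches * ports_per_switch - servers
        usage.append((switches, unused))
    return usage

# Hypothetical row of four racks with uneven server counts.
racks = [20, 36, 50, 12]
usage = tor_port_usage(racks)
total_switches = sum(switches for switches, _ in usage)
total_unused = sum(unused for _, unused in usage)

print("per-rack (switches, unused ports):", usage)
print("switches to manage:", total_switches)
print("unused ports in the row:", total_unused)
```

Note how the rack with 50 servers needs a second switch for just two extra servers. This is the kind of situation that makes unplanned expansion within a rack awkward with fixed-port switches.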

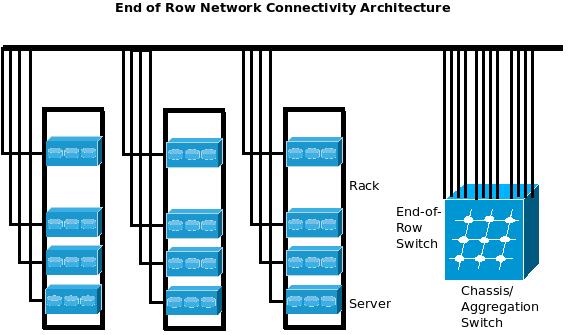

EOR – End of Row design:

In the EOR (End of Row) Network design, each server in the individual racks is connected directly to a common EOR (End of Row) Aggregation Switch, without connecting to individual switches in each rack. Naturally, longer cables are needed to connect each server to the Chassis based EOR/ Aggregation Switches. There might be multiple such EOR switches in the same data center, one for each row or for a certain number of racks.
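
Because all the servers in a row share one chassis switch, ports can be sized for the row as a whole rather than rack by rack. The sketch below shows that arithmetic under assumed numbers: the 48-port line card, the server counts and the function name `eor_line_cards` are hypothetical and used only for illustration.

```python
import math

def eor_line_cards(servers_per_rack, ports_per_line_card=48):
    """Return (line_cards_needed, unused_ports) for one row.

    Assumes a single chassis switch serves the whole row and that
    each server uses exactly one port.
    """
    total_servers = sum(servers_per_rack)
    cards = math.ceil(total_servers / ports_per_line_card)
    unused = cards * ports_per_line_card - total_servers
    return cards, unused

# Hypothetical row of four racks with uneven server counts.
row = [20, 36, 50, 12]
cards, unused = eor_line_cards(row)

print("line cards needed:", cards)
print("unused ports in the row:", unused)
```

Since the ports are pooled, a lightly filled rack does not strand ports the way a dedicated per-rack switch would.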

Advantages/ Limitations of EOR – End of Row approach:

- Since Chassis Switches are deployed in EOR configurations, expansion (for the total number of ports) can be done by just adding a line card as most of the Chassis Switches are planned for expandable configurations, without limiting backplane capacities.

- Chassis Switches enable a High Availability configuration with almost no single points of failure as most of the critical components (control module, cooling module, power supply module, etc) can be deployed in redundant (1+1 or N+1 or N+N) configurations. The fail-over is almost immediate (often without affecting end user sessions).

- Placement of Servers can be decided independently, without any ‘minimum/maximum servers in a single rack’ constraints. So, servers can be placed more evenly across the racks and hence there may not be excessive cooling requirements due to densely placed servers. The EOR architecture would also bring down the number of un-utilized switch ports, drastically.

- The number of switches / number of ports to be managed is lower in EOR – End of Row deployments. This decreases the capital expenditure, running costs and time needed for maintenance.

- Since each packet passes through fewer switches, the latency added by traversing multiple switches is minimized.

- Servers that exchange a considerable amount of data among themselves can be connected to the same line card in the Chassis switch, irrespective of the rack they belong to. This minimizes delay and enables better performance due to local switching.

- Longer cables are required to connect the Chassis switch (at the end of the row) to each server in EOR deployments, and hence special arrangements might be required to carry them across the row to the switch. This might result in excessive space utilization along the rack/ data center cabling routes, increasing the amount of data center space required to house the same number of servers.

- The cost of higher performance cables (used in data centers) can be considerable and hence cabling costs can get higher than TOR deployments.

- It is difficult/ more expensive to upgrade the cabling infrastructure to support higher speeds/ performance, as the longer cables need to be replaced individually while upgrading from 1GE to 10GE, for example.

- Fiber cables (which can be upgraded by just changing the optics at either end, without changing the entire cabling infrastructure) cannot be used extensively, as many individual servers do not support optical fiber (OFC) connectivity.
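
The cabling trade-off between the two designs can be made concrete with a rough estimate. This is only a sketch under stated assumptions: the rack width (0.6 m), the in-rack cable run (2 m), two uplinks per TOR rack, and the function names are all hypothetical values chosen for illustration. It also assumes the aggregation/ EOR switch sits at the end of the row in both cases.

```python
def eor_cable_length(racks, servers_per_rack, rack_width_m=0.6, in_rack_run_m=2.0):
    """Total cable length (m) when every server runs to the end-of-row switch."""
    total = 0.0
    for rack_index in range(racks):
        # Horizontal distance from this rack to the switch at the end of the row.
        horizontal = (rack_index + 1) * rack_width_m
        total += servers_per_rack * (horizontal + in_rack_run_m)
    return total

def tor_cable_length(racks, servers_per_rack, uplinks_per_rack=2,
                     rack_width_m=0.6, in_rack_run_m=2.0):
    """Total cable length (m) with a TOR switch in every rack."""
    total = 0.0
    for rack_index in range(racks):
        horizontal = (rack_index + 1) * rack_width_m
        # Short server-to-switch cables that stay inside the rack.
        total += servers_per_rack * in_rack_run_m
        # Only the uplinks travel along the row to the aggregation switch.
        total += uplinks_per_rack * (horizontal + in_rack_run_m)
    return total

# Hypothetical row: 10 racks with 20 servers each.
racks, servers = 10, 20
eor_total = eor_cable_length(racks, servers)
tor_total = tor_cable_length(racks, servers)
eor_long_runs = racks * servers   # cables leaving the racks in EOR
tor_long_runs = racks * 2         # uplinks leaving the racks in TOR

print(f"EOR: {eor_total:.0f} m of cable, {eor_long_runs} cables leaving the racks")
print(f"TOR: {tor_total:.0f} m of cable, {tor_long_runs} cables leaving the racks")
```

With these made-up numbers, the EOR row needs roughly twice the total cable length and ten times as many cables routed along the row. These are the cables that would have to be replaced individually during a speed upgrade.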

excITingIP.com
